The Open Compute Project (OCP) is pleased to announce that NVIDIA is contributing its HGX-H100 GPU baseboard physical specification to the OCP Open Accelerator Infrastructure (OAI) Sub-Project. NVIDIA’s contribution of the HGX baseboard to the OCP Community will have a huge impact on the OAI Sub-Project’s ability to leverage HGX and develop cost-effective, high-efficiency, scalable platform and system specifications targeting high-performance computing in AI and HPC applications. The OAI Sub-Project, part of the Server Project within OCP, will develop and define the UBB (Universal Base Board) v2.0 physical design specifications based on the HGX specification.

The OCP Accelerator Module (OAM) design specification, first announced at the March 2019 OCP Global Summit, defines the common mezzanine form factor specification for an accelerator module. Since then, OCP’s OAI Project was formed and extended the scope to define specifications for infrastructure in the AI and HPC domains. The OAI Project, with more than 30 participating companies, has been working on various specifications and contributed the UBB v1.0 specification in June 2020 and the UBB v1.5 specification in February 2022.

Bill Carter, Chief Technology Officer for the Open Compute Project Foundation, states, “The industry and ecosystem can’t move as quickly if we continue to have non-compatible options. 2.0 solves this. The OAM specification, along with the baseboard and enclosure infrastructure, will speed up the adoption of new AI accelerators and will establish a healthy and competitive ecosystem.”

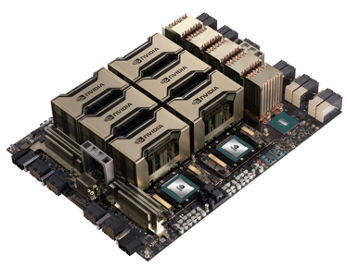

As shown in the picture above, HGX is a baseboard designed by NVIDIA that hosts eight of the most recently announced Hopper GPU modules. The HGX baseboard physical specification defines the physical dimensions, host interfaces (e.g., the PCIe connection to the host board), management interfaces, debug interfaces, etc. The HGX contribution package includes the baseboard physical specification, the baseboard input/output pin list and pin map, and 2D/3D drawings. The contribution package can be found on the OCP OAI wiki page.

NVIDIA’s HGX contribution satisfies all four OCP tenets: Efficiency, Scalability, Openness, and Impact.

- Efficiency - This is the third-generation HGX baseboard form factor, and each generation has provided ~3x-5x higher AI training throughput while consuming similar active power. As a result, the HGX baseboard is now the de facto standard, with most global hyperscalers designing around this form factor for AI training and inference.

- Scalability - Globally, hundreds of thousands of HGX systems are in production. The systems and enclosures for this form factor will be stable and built at scale. The HGX specification will include physical dimensions, management interfaces for monitoring and debugging, etc., for at-scale services.

- Openness - HGX-based systems are the most common AI training platform globally. Many OEMs/ODMs have built HGX-based AI systems. With the contribution of this HGX physical specification, the OAI group will converge UBB v2.0 with the HGX specification as much as possible. OEMs/ODMs could then reuse the same system design with different accelerators on UBB/HGX-like baseboards, sharing the same baseboard dimensions, host interfaces, management interfaces, power delivery, etc.

- Impact - The NVIDIA HGX platforms have become the de facto global standard for AI training platforms. Opening this HGX baseboard form factor allows other AI accelerator vendors to leverage the HGX ecosystem of systems and enclosures. This should let other AI vendors build on existing infrastructure and bring their solutions to adoption sooner, which further accelerates innovation in the AI/HPC fields.

OCP looks forward to the outcomes of this contribution in the next-generation UBB v2.0 specification, which should benefit the industry by leveraging the OAI infrastructure and accelerating innovation.